New York, NY, April 24, 2026 — As more people turn to generative AI tools to manage stress, anxiety, and other mental health concerns, Grow Therapy (Grow) is introducing a new approach designed to keep licensed clinicians at the center of care. The company today announced the launch of an AI-powered coach (Coach), a chat feature embedded within its app that is intended to support clients between therapy sessions, with proprietary safety features developed and monitored by licensed clinicians.

“Most people spend about an hour each week with their provider,” said Alan Ni, Chief Technology Officer and Co-founder of Grow Therapy. “At Grow Therapy, we’ve been exploring what support can look like during the other 167 hours – between sessions, outside care hours, and in the everyday moments when people are applying what they’ve learned. AI is reshaping how we care for our physical, mental, and emotional health. In mental healthcare, that change must be approached thoughtfully, with licensed professionals guiding how these tools are used and human empathy remaining at the core.”

Adoption of general-purpose chatbots for mental health support has outpaced safeguards, leading to widely reported instances of serious harm. Yet generative AI holds real promise in mental health care, extending support beyond the limits of clinician availability, between sessions and outside care hours. Grow’s purpose-built AI coach captures that potential while preserving professional oversight and risk detection.

“As more people turn to AI tools for mental health support, we’ve seen a need for an approach that keeps providers involved in the process,” said Kevin M. Ramotar, Psy.D., CPHQ, Director of Clinical Product and AI. “Our AI coach was built to offer structured support between sessions, with clear boundaries, multiple safety layers, and clinician oversight. We designed it around what providers told us they needed: transparency, control, and technology that supports care without replacing clinical judgment. It helps clients stay engaged between sessions while giving providers greater visibility to drive better care experiences.”

Effective support before, during, and after the session

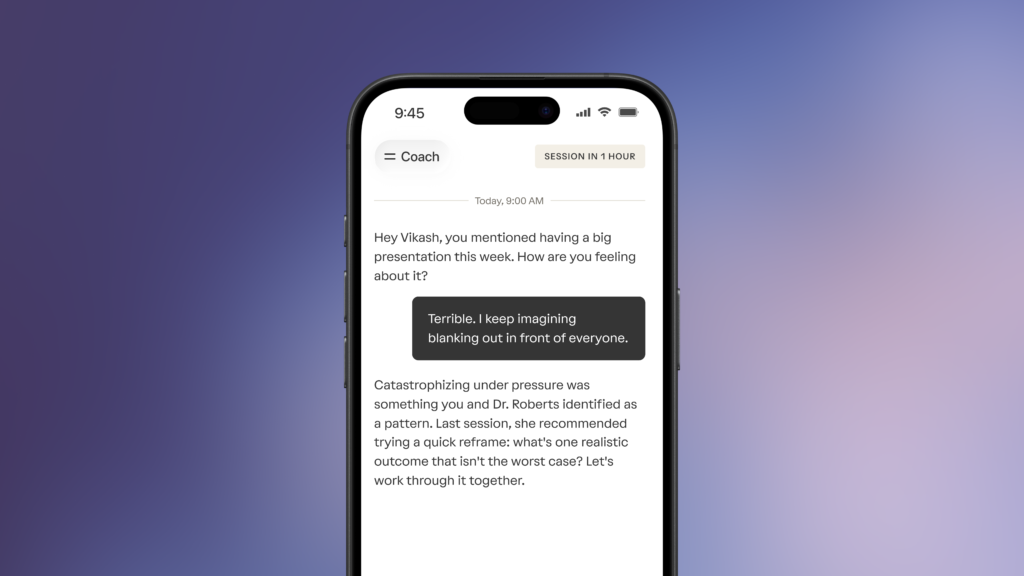

The AI coach is a nonjudgmental space intentionally designed for mental health that can be used to practice new skills, reflect, and process emotions between therapy appointments. It is not intended to diagnose conditions or provide treatment. Instead, it is a supplemental and optional tool to help clients stay engaged between appointments and extend their care. The literature shows that practicing the methods learned in therapy improves mental health care outcomes, and Grow’s theory is that continuous support will help clients get the most out of their relationship with their therapist.

The chat capability draws from evidence-based mental health frameworks, including techniques commonly used in cognitive behavioral therapy (CBT), dialectical behavior therapy (DBT), acceptance and commitment therapy (ACT), and behavioral activation (BA). Clients can use it to work through thoughts, practice coping strategies, and flag topics to discuss with their provider at their next session. It also strengthens the bridge between sessions by reinforcing goals, whether that means following through on therapeutic homework, setting boundaries, or staying accountable.

The AI coach has been introduced gradually across Grow’s network, with the company monitoring usage, quality, and safety as access expands. Since the testing phase began in December 2025, 50% of active providers have at least one client using it, and more than 800,000 messages have been sent. Early usage patterns from its phased national rollout suggest people are primarily using the tool for:

- Relationship navigation, accounting for 20% of all conversations.

- Skill practice applying therapy techniques.

- In-the-moment support, such as managing disruptions.

- Emotional articulation, particularly when not yet ready to raise a topic with their provider.

- Thought organization and session prep, solving the “cold start” problem of not knowing what to bring up with their therapist.

Most providers who have been introduced to the AI coach say they would recommend it to their clients. This is especially meaningful at a time when AI is poised to reshape their profession. It also addresses a major blind spot: when clients turn to general-purpose chatbots, providers have no visibility into what is being discussed. By embedding chat-based support built for mental health directly into the care delivery platform, the AI coach keeps providers informed so they can step in and take action when appropriate.

“Between sessions, [I encourage clients to] spend time reflecting on whatever we have talked about,” said Lynn Whalen, a licensed clinical social worker based in Minnesota. She has found Coach to be particularly effective with patients who struggle with task initiation, procrastination, or perfectionism. “I explain what [Coach] is and what it isn’t,” added Whalen. “And one of the things I let them know is that this is not the resource that you go to if you’re feeling unsafe or you’re feeling like you want to hurt yourself.”

Built-in safety controls and provider oversight

Safety is central to how the AI coach works.

“We wanted to make sure we put safety first and weren’t just relying on one layer of protection. So we built multiple independent safety layers, automated quality scoring of every conversation, and ongoing review by a team of licensed clinicians,” said Matt Scult, Ph.D., Principal of Clinical AI.

Once the safety guardrails are activated, the following steps occur:

- The AI coach automatically pauses the conversation and shares appropriate crisis or support resources.

- Providers receive specific alerts if a potential safety concern is detected, allowing them to use their clinical discretion on next steps.

- A clinical quality team composed of licensed clinicians regularly audits the safety flags (and random samples) for continuous quality improvement. In quality assurance review to date, the safety system has correctly identified 99% of potential safety concerns in relevant conversations.

Grow forms an AI advisory panel

Grow believes independent experts serving as accountability partners are critical to setting and maintaining a high bar for responsible AI in mental health care. To support that commitment, the company established an external Advisory Panel to help inform the ongoing oversight of the AI coach. The panel brings together expertise spanning psychology, clinical AI research, mental health epidemiology, ethics, and consumer insights, providing interdisciplinary guidance grounded in both scientific rigor and compassion for the people Grow serves. Their counsel supports Grow’s approach to safety, evaluation, and continuous improvement as the AI coach evolves.

AI advisors’ professional experience

Jacinta M. Jiménez, Psy.D., Clinical Psychology

Dr. Jacinta M. Jiménez is a Stanford-trained licensed clinical psychologist, board-certified coach (BCC), and leadership strategist whose work bridges behavioral science, technology, and human resilience. For more than 15 years, she has designed evidence-based frameworks that help people and organizations thrive under pressure while staying grounded in ethics and purpose. As Vice President of Coaching Innovation at BetterUp, Dr. Jiménez played a key role in architecting and scaling the company from its earliest stages into a global leader in digital coaching, serving Fortune 500 enterprises worldwide. There, she founded and chaired the company’s first Ethics Committee, establishing governance standards for the responsible use of behavioral science and technology.

Earlier in her career, she contributed to the development of one of the first clinically informed mobile mental-health apps for the National Center for PTSD. An award-winning author of The Burnout Fix (McGraw-Hill), her work has been featured in Harvard Business Review, Fast Company, and Business Insider. She has spoken at NASA, Google, and Columbia University’s Zuckerman Institute, equipping leaders with tools for ethical and resilient leadership. Dr. Jiménez also serves on the Executive Board of Abroad.io and advises organizations on building human-centered systems that advance discernment, adaptability, and connection in the age of AI.

Ethan Goh, MD, Clinical AI Research and Scientific Evaluation

Dr. Ethan Goh is Executive Director at ARISE, a Stanford-Harvard research network focused on how frontier AI models are safely translated into real-world care. His research is supported by the Macy Foundation and the Gordon & Betty Moore Foundation, and has been covered in The New York Times, The Washington Post, and CNN.

Dr. Goh is a Founding Editorial Board member and Associate Editor at BMJ Digital Health & AI, and an external reviewer for multi-year research grants.

Walter Sinnott-Armstrong, PhD, Ethics Scholar

Walter Sinnott-Armstrong is Chauncey Stillman Professor of Practical Ethics in the Department of Philosophy and the Kenan Institute for Ethics at Duke University. He has secondary appointments in the Law School and the Department of Psychology and Neuroscience, and he is core faculty in the Duke Center for Cognitive Neuroscience, the Duke Institute for Brain Sciences, and the Duke Center for Interdisciplinary Decision Sciences. He was born in Memphis, Tennessee, and attended the Hotchkiss School, Amherst College (B.A. 1977), and Yale University (Ph.D. 1982). He taught at Dartmouth College from 1981 to 2009 and moved to Duke University in 2010.

Valerie Hoffman, PhD, MPH, Mental Health Epidemiology

Valerie is passionate about improving the state of solutions developed to help those affected by mental health challenges. As the current CEO and Founder of Pogo Research, she works cross-functionally with digital behavioral health companies to understand their value by suggesting valid data assessments and variables to collect and analyze, as well as studies to conduct that will provide insights into how their solutions improve access, efficacy, satisfaction, equity, and cost.

She has authored over 100 publications in peer-reviewed journals and presented at over 100 national scientific conferences. She is a former VP of Woebot Health and Chief Research Officer at Meru Health. A psychiatric epidemiologist by training, she earned an MPH from Yale University and a PhD from Johns Hopkins Bloomberg School of Public Health, where she was an NIMH predoctoral fellow.

Steve Duke, Insights and Strategy

Steve Duke is the founder of Hemingway, an organization supporting behavioral health innovators. He is also the author of The Hemingway Report, a newsletter providing industry analysis to thousands of behavioral health leaders. His work focuses on using data to understand the intersection of technology, markets, and clinical innovation, and translating that understanding into actionable insights. Before Hemingway, Steve worked as a management consultant at McKinsey and led growth teams at two tech unicorns (LetsGetChecked & Wayflyer).

Media Contacts:

Kristina McPherson kristina@growtherapy.com

*Coach is available to eligible adult clients. Availability may vary by location and insurance plan.